How You Turn a Know-It-All Into a Specialist

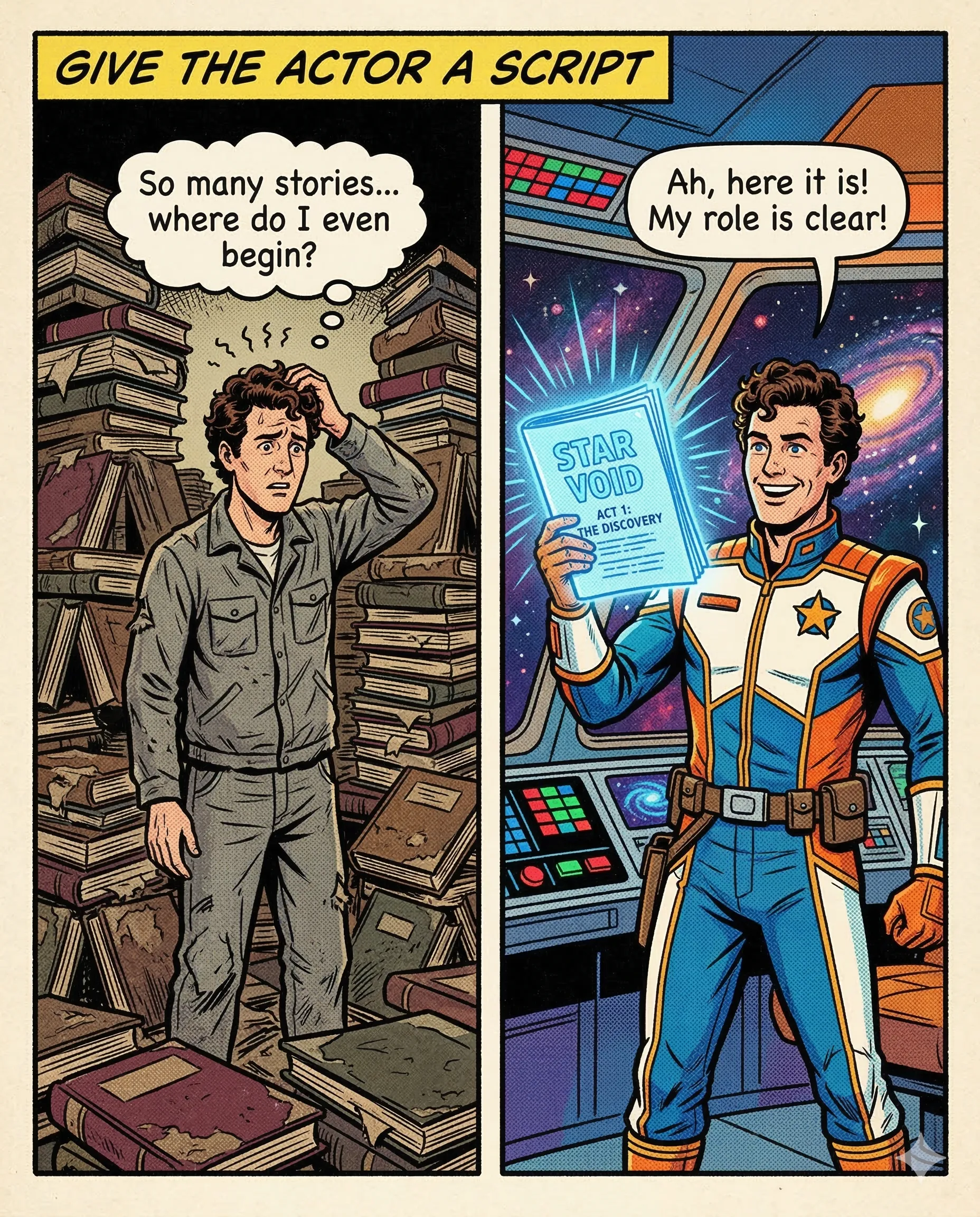

Imagine a brilliant actor who has memorized every classic play in history. This actor is a genius when it comes time to perform Shakespeare or Miller. However, you want this person to play a specific role in your new movie. The actor has the talent but still needs a script to learn your character’s voice, habits, and unique style.

The script provides the narrow focus that transforms a general talent into a perfect specialist.

This process is called Fine-Tuning. While Augmentation (Post 2) is like giving an AI a library to look at, Fine-Tuning is like changing the AI’s actual personality. We take a “base” model that has already been trained on a huge share of the internet and show it hundreds or thousands of examples of exactly how we want it to behave.

The mechanism behind this is weight adjustment. A large AI model consists of billions of tiny numeric connections, called weights. During Fine-Tuning, we slightly shift these weights to favor specific patterns. If we show the model enough examples of “friendly assistant” talk, “rude” or “robotic” responses become less likely for the AI to choose. The model eventually learns the “shape” of your data and starts mimicking it automatically.
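To make weight adjustment concrete, here is a toy sketch in Python. It is not how a real language model is trained: it assumes a made-up model with only two weights and a single hypothetical feature (whether a reply opens with a warm greeting). The idea is the same at any scale, though. Each example nudges the weights a little toward the behavior we want.

```python
import math

def score(weights, features):
    """Return the model's probability (0 to 1) that a reply is 'friendly'."""
    z = sum(w * x for w, x in zip(weights, features))
    return 1.0 / (1.0 + math.exp(-z))

def fine_tune(weights, examples, learning_rate=0.5, epochs=200):
    """Nudge existing weights toward the behavior shown in the examples."""
    tuned = list(weights)  # copy so the base weights stay untouched
    for _ in range(epochs):
        for features, label in examples:
            error = score(tuned, features) - label
            # Log-loss gradient for each weight is error * feature value.
            tuned = [w - learning_rate * error * x for w, x in zip(tuned, features)]
    return tuned

# Hypothetical features: [has_warm_greeting, bias]
base_weights = [0.0, 0.0]
examples = [
    ([1.0, 1.0], 1),  # warm greeting, labeled friendly
    ([0.0, 1.0], 0),  # no greeting, labeled not friendly
]

tuned_weights = fine_tune(base_weights, examples)
warm = [1.0, 1.0]
print("before:", score(base_weights, warm))
print("after: ", round(score(tuned_weights, warm), 3))
```

Before training, the toy model is undecided and scores the warm reply at exactly 0.5. After training, the same reply scores higher and the curt one scores lower, because the weights have shifted toward the examples.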

In practice, this means training an AI to sound exactly like your brand. For example, a travel company fine-tunes a model on its past emails so that every new response uses its specific, adventurous tone and mentions its favorite plant-based resort options. The AI stops being a generic robot and starts behaving like a trained employee.
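For the travel company above, most of the work is preparing the examples. The sketch below shows one way past emails might be turned into training data. The emails, the system prompt, and the field names are all invented for illustration. The chat-style JSONL layout (one JSON record per line) is a common convention, but the exact format depends on the fine-tuning service or library you use, so check its documentation first.

```python
import json

# Hypothetical past emails: what the customer asked and how the team replied.
past_emails = [
    {
        "customer": "Do you have any resorts with vegan menus?",
        "reply": "Adventure awaits! Our jungle lodge serves a fully plant-based menu.",
    },
    {
        "customer": "Can I change my booking dates?",
        "reply": "Absolutely! Tell us your new dates and we will chart a fresh course.",
    },
]

# Assumed instruction describing the brand voice.
SYSTEM_PROMPT = "You are a travel assistant with an upbeat, adventurous tone."

def to_training_line(email):
    """Convert one past email exchange into a single JSONL training record."""
    record = {
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": email["customer"].strip()},
            {"role": "assistant", "content": email["reply"].strip()},
        ]
    }
    return json.dumps(record, ensure_ascii=False)

# One line per example; this text would be saved as a .jsonl file.
jsonl = "\n".join(to_training_line(email) for email in past_emails)
print(jsonl)
```

Each line pairs a real customer question with the reply your team actually sent, so the model sees the tone you want over and over.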

Success happens when the AI understands the “vibe” as well as the facts. You transition from a generalist who knows everything to a specialist who knows exactly how you work.

The Takeaway: a base AI has the knowledge, but a fine-tuned AI has the soul of your business.

Why This Matters for Your AI Product

Fine-tuning is a powerful tool in the AI orchestration kit, but it’s often misunderstood. When building a professional product, consider:

- Style vs. Knowledge: Use fine-tuning to teach the “how” (tone, format, style) and RAG to teach the “what” (current facts, documents, data).

- Data Quality Over Quantity: A few hundred “perfect” examples are more valuable than ten thousand mediocre ones.

- Performance: A smaller, fine-tuned model (such as a Llama 3 or Mistral variant) can often match or outperform a generic giant like GPT-4 on specific, narrow tasks while being significantly faster and cheaper to run.

But which one is right for your internal knowledge? Read our updated guide on RAG vs Fine-Tuning.

AI specialists call it Fine-Tuning: a process that retrains an existing model on a smaller, specific dataset to sharpen its performance on a particular task or style.

Have you ever felt your AI sounded a bit too robotic? What kind of personality would you give it if you could?

Part 5 of 18 | #RAGforHumans