The Border Checkpoint: Why Neurons Need a Threshold

A brain (or an AI) doesn’t just pass every signal along. To be useful, it must decide what matters and what is just noise. It needs a Gatekeeper.

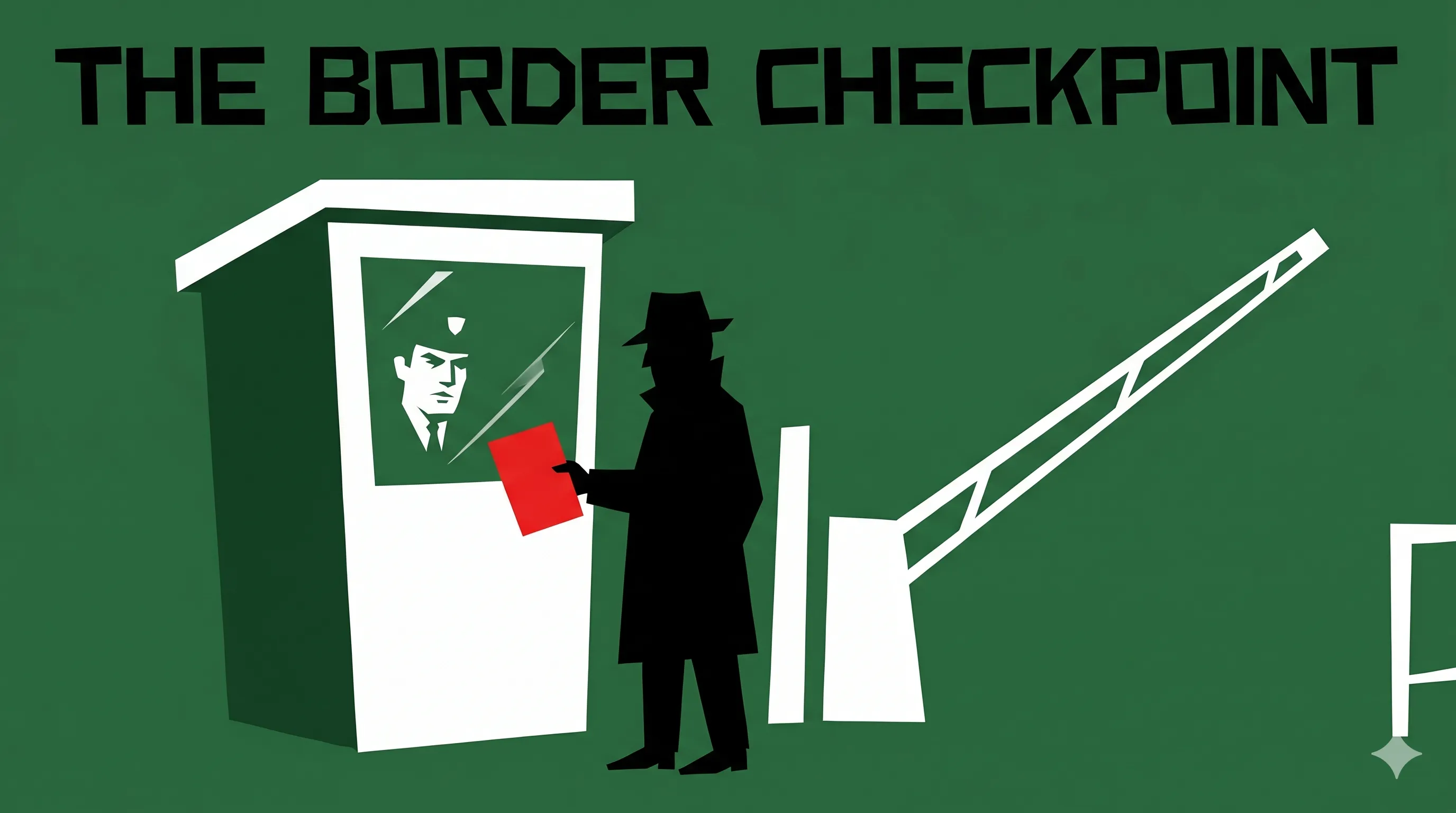

The Scenario

Imagine you are an undercover agent trying to cross a heavily guarded border in a 1960s espionage thriller. To get through, you must present your credentials to the “Border Guard”—a stoic, unmoving figure behind a bulletproof window.

The guard doesn’t care about “mostly valid” papers. They have a strict mental threshold. If your forged passport is only 60% convincing, the guard stays silent and the gate stays closed. You are “Inactive.” But if your paperwork is 90% perfect, the guard suddenly nods, the gate swings open, and you are allowed to pass your information to the other side. You have “Activated.”

This decision-making gatekeeper is the ACTIVATION FUNCTION. It’s the moment where a silent neuron suddenly decides to “Fire” and pass its signal to the next layer.

The Reality

In Deep Learning, an ACTIVATION FUNCTION turns a simple mathematical sum into a meaningful decision.

The most famous one is called ReLU (Rectified Linear Unit). It stays at zero (Silent) for any input at or below zero, and it passes any positive input through unchanged. Without these functions, a neural network is just a giant linear calculator: no matter how many layers you stack, the whole thing collapses into one straight-line formula, so it can’t learn complex shapes, recognize faces, or tell a “Not a Cat” from a “Maybe a Cat.”
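To make the “giant linear calculator” point concrete, here is a minimal sketch in plain Python. The two tiny “layers” and their numbers (2, 1, 3, -4) are made up purely for illustration; a real network would use many weights per layer, but the same collapse happens.

```python
def relu(x):
    """ReLU: zero for anything at or below zero, unchanged above it."""
    return max(0, x)

# Two hypothetical one-number "layers" (illustrative values only).
def layer1(x):
    return 2 * x + 1

def layer2(h):
    return 3 * h - 4

def stacked_linear(x):
    # No activation between the layers.
    return layer2(layer1(x))

def single_line(x):
    # 3 * (2x + 1) - 4 simplifies to 6x - 1: one straight line.
    return 6 * x - 1

def stacked_with_relu(x):
    # The same two layers with the gatekeeper in between.
    return layer2(relu(layer1(x)))

for x in (-2, 0, 3):
    print(x, stacked_linear(x), single_line(x), stacked_with_relu(x))
```

The first two result columns always match, which is the collapse: two layers without an activation do nothing a single layer couldn't. The third column breaks away whenever the first layer's output is negative, and that bend is what lets a network fit shapes a straight line cannot.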

Activation functions provide the “Gate” logic that allows a machine to say: “This feature is important. Pass it on!”

The Why

Imagine a world without activation. Every tiny whisper of noise would travel through the entire network, overwhelming the system. It would be like a border where anyone who looks “vaguely like a person” is allowed to pass through into the secret lab. Activation functions provide the necessary “Filter” that keeps the AI focused on the signals that actually help it win the game.

The Takeaway

The Activation Function is the threshold that decides if a signal is strong enough to “Fire” the neuron.

AI specialists call it: Activation Function (ReLU, Sigmoid)

An Activation Function is a mathematical gate applied to a neuron’s weighted sum of inputs. It determines how much of that signal, if any, is passed on to the next layer in the network.
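For the curious, the two gates named here can be sketched in a few lines of Python. This is a toy, not how any particular library implements them: the `neuron` helper, the weights, the bias, and the “papers” scores are all hypothetical values chosen to echo the border guard story.

```python
import math

def relu(x):
    """Strict guard: blocks anything at or below zero, passes the rest as-is."""
    return max(0.0, x)

def sigmoid(x):
    """Softer guard: squashes any number into a score between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-x))

def neuron(inputs, weights, bias, activation):
    # Step 1: the weighted sum of the inputs, plus a bias.
    total = sum(i * w for i, w in zip(inputs, weights)) + bias
    # Step 2: the activation function decides what gets passed on.
    return activation(total)

# Hypothetical numbers: the bias of -0.5 plays the role of the guard's strictness.
weights = [1.0, 1.0]
bias = -0.5
weak_papers = [0.2, 0.1]    # weighted sum ends up below zero
strong_papers = [0.9, 0.8]  # weighted sum ends up above zero

print(neuron(weak_papers, weights, bias, relu))       # silent: 0.0
print(neuron(strong_papers, weights, bias, relu))     # fires with a positive value
print(neuron(weak_papers, weights, bias, sigmoid))    # below 0.5
print(neuron(strong_papers, weights, bias, sigmoid))  # above 0.5
```

Notice that neither gate is a pure on/off switch. ReLU passes strong signals at full strength, while Sigmoid gives every signal a graded score rather than a hard yes or no.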

💬 If you were the Border Guard today, would you prefer to be “Lenient” and let everyone through, or “Strict” and risk stopping a friendly agent?

Part 6 (Activation) of 25 | #DeepLearningForHumans