How Much Should AI Be Allowed to Do on Its Own?

Imagine you are teaching a student how to manage a complex project. At first, you perform every task while the student observes. This is the lowest level of autonomy. As the student learns, you allow them to draft the emails, but you still read and approve every single word. Eventually, the student handles the entire project independently, only providing you with a final summary of their success.

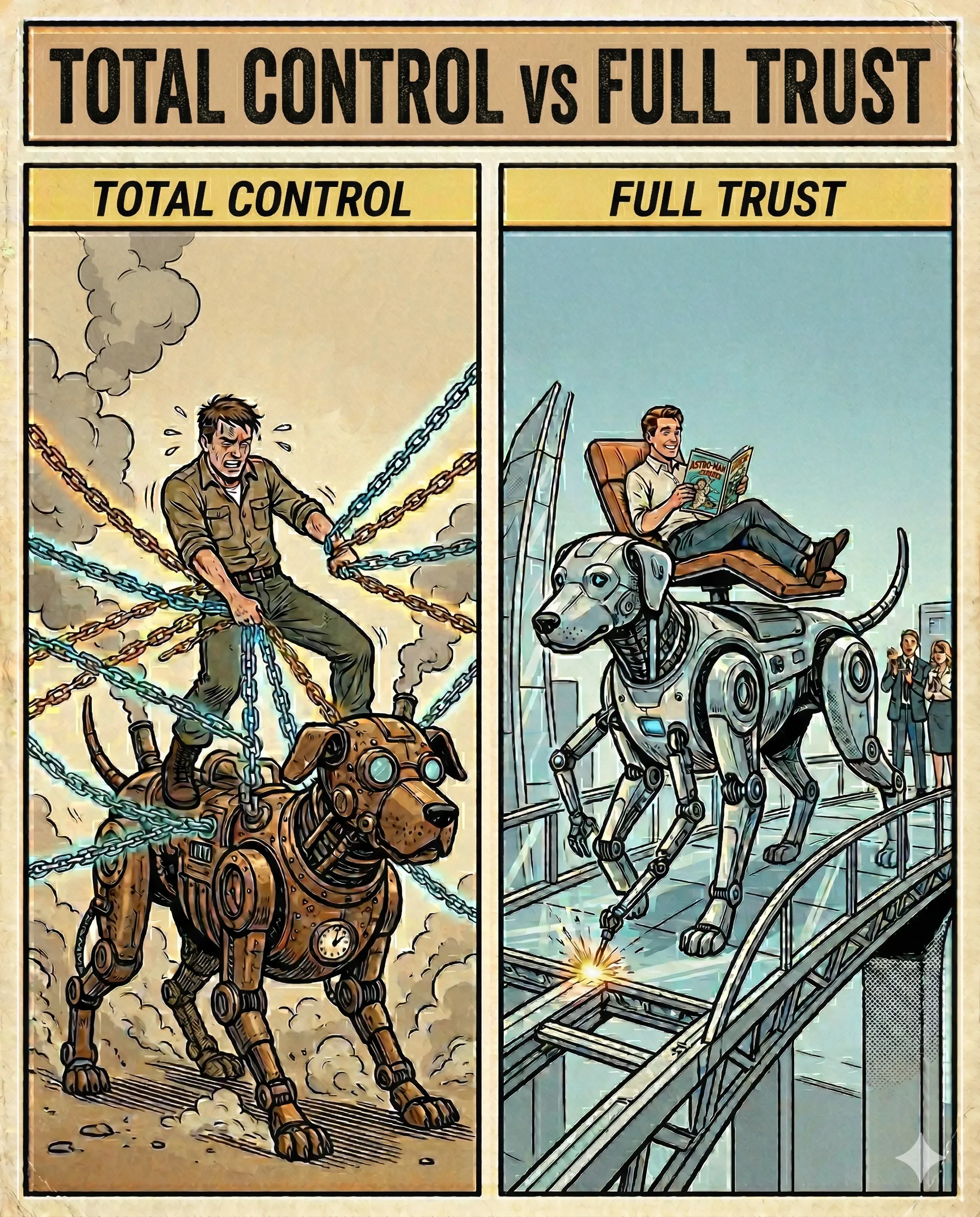

This ladder of responsibility is exactly how we measure the freedom of an AI.

We classify AI autonomy into five distinct levels. Level 1 is a simple tool where the human does all the work. Level 5 is a fully independent agent. The levels in between hand over responsibility one step at a time. The secret to a safe and powerful AI system is finding the right level for each task. You want your AI to be “Autonomous enough” to save you time, yet “Aligned enough” to remain under your control.

The mechanism behind the lower levels is called “Human-in-the-Loop” (HITL). At these levels, the AI pauses and asks a “Human Gatekeeper” to approve an action. At higher levels, the AI uses a “Reasoning Loop” to check its own work against the rules you have set. It reviews its own plan, identifies potential errors, and proceeds only when the logic holds up.
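The gate described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the names `Action`, `run_action`, and `ask_human`, and the choice of Level 4 as the point where self-checking takes over, are assumptions made for this example.

```python
from dataclasses import dataclass
from typing import Callable, List

# Assumption for this sketch: levels below 4 need a human sign-off.
SELF_CHECK_LEVEL = 4


@dataclass
class Action:
    description: str
    cost: float


# A rule takes an action and returns True when the action is acceptable.
Rule = Callable[[Action], bool]


def run_action(
    action: Action,
    level: int,
    rules: List[Rule],
    ask_human: Callable[[Action], bool],
) -> str:
    """Decide whether an action may proceed at the given autonomy level."""
    if level < SELF_CHECK_LEVEL:
        # Lower levels: pause and let the human gatekeeper decide.
        return "executed" if ask_human(action) else "rejected by human"

    # Higher levels: check the action against every preset rule.
    if all(rule(action) for rule in rules):
        return "executed"
    # A failed self-check stops the action instead of proceeding.
    return "blocked by self-check"
```

With a rule such as `lambda a: a.cost <= 500`, a 420-unit booking at Level 3 runs only if `ask_human` returns `True`, while the same booking at Level 4 runs without asking because it passes the rule.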

In practice, this lets you hand over more work without giving up control. For example, a travel planning AI at Level 3 identifies three great hotel options and waits for your choice. At Level 4, you give the AI a budget and a “Yes,” and it proceeds to book the flights, handle the payments, and send the itinerary to your phone automatically. The AI carries the burden of the execution while you retain the power of the decision.
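The difference between the two levels in the travel example can be shown as a rough sketch. Everything here is hypothetical: `Hotel`, `plan_trip`, and the status strings are invented for illustration, "great options" is approximated by sorting on price, and the actual booking, payment, and itinerary steps are left as a placeholder.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Hotel:
    name: str
    price: float


def plan_trip(hotels: List[Hotel], level: int, budget: Optional[float] = None) -> dict:
    """Return a shortlist (Level 3) or a booking (Level 4)."""
    # Stand-in for "three great options": the three cheapest hotels.
    shortlist = sorted(hotels, key=lambda h: h.price)[:3]
    names = [h.name for h in shortlist]

    if level == 3:
        # Level 3: present options and stop; the human makes the choice.
        return {"status": "awaiting_choice", "options": names}

    if level == 4:
        if budget is None:
            raise ValueError("Level 4 needs a budget approved by the human")
        affordable = [h for h in shortlist if h.price <= budget]
        if not affordable:
            # Nothing fits the approved budget, so hand control back.
            return {"status": "needs_human", "options": names}
        # Placeholder for the real booking, payment, and itinerary steps.
        return {"status": "booked", "hotel": affordable[0].name}

    raise ValueError(f"Unsupported level: {level}")
```

The budget acts as the human's decision made in advance: the Level 4 branch only executes inside that limit and returns control when it cannot.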

Success happens when the level of trust you grant matches the level of skill the AI has shown. As that trust grows, you transition from a manual operator to a high-level strategist.

The Takeaway: a great AI system follows a ladder of trust—moving from an assistant you watch to a partner you trust.

Why This Matters for Your AI Product

Defining the level of autonomy is a core strategic decision when deploying AI into your workflow.

- The Cost of High Autonomy: Level 4 or 5 systems typically require more compute and more rigorous testing. They are not always the best choice if a fast Level 2 assistant can do 80% of the work.

- Safety First: For sensitive domains (law, medicine, finance), treat “Human-in-the-Loop” as mandatory. You want the AI to suggest, but never to finalise.

- Verification is the Work: As autonomy increases, your role shifts from “Doing the work” to “Reviewing the work.” Designing clear verification interfaces is the key to building user trust.
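One way to encode the "Safety First" point from the list above is to cap the autonomy level for sensitive domains. This is only a sketch under stated assumptions: `effective_level` is a hypothetical helper, and treating Level 3 as the highest "suggest, but never finalise" level is a choice made for this example, not something the five-level scale prescribes.

```python
# Domains named in the list above.
SENSITIVE_DOMAINS = {"law", "medical", "finance"}

# Assumption: Level 3 is the highest level that still waits for approval.
SUGGEST_ONLY_LEVEL = 3


def effective_level(requested_level: int, domain: str) -> int:
    """Clamp the requested autonomy level for sensitive domains."""
    if not 1 <= requested_level <= 5:
        raise ValueError("autonomy level must be between 1 and 5")
    if domain.lower() in SENSITIVE_DOMAINS:
        # Never exceed the suggest-only level in a sensitive domain.
        return min(requested_level, SUGGEST_ONLY_LEVEL)
    return requested_level
```

A request for Level 5 in "finance" is reduced to Level 3, while the same request for a non-sensitive domain such as travel planning passes through unchanged.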

AI specialists call it: Degrees of Autonomy. A scale from 1 to 5 that defines how much a system can plan and execute tasks without human intervention.

On a scale of 1 to 5, how much freedom would you give an AI to manage your weekly schedule?

Part 11 of 18 | #RAGforHumans