The Security of the Intelligent Master Key

Imagine you have a beautiful home and you decide to hire a talented decorator. You want them to have access to the living room and the kitchen to do their best work, but you prefer to keep your private study and the safe locked. You give them a “Guest Key” that only opens the doors they need to enter. Your home remains secure while the work proceeds with total freedom.

This is the core of security in the world of connected AI.

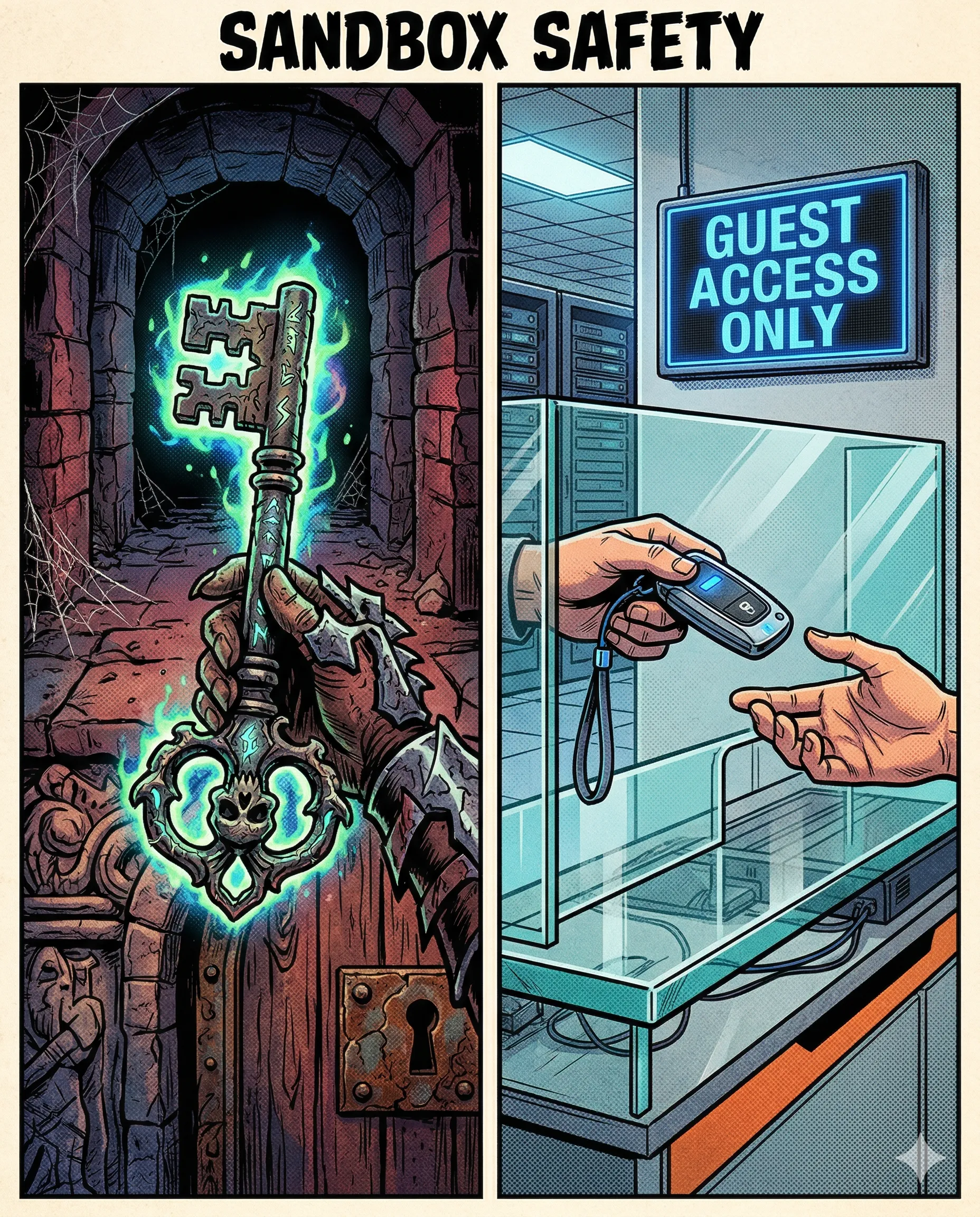

When you plug your AI into your tools using a standard like MCP (Model Context Protocol), you control the “Gate.” Through the servers you connect and the way you configure them, you choose which files and which databases the AI is allowed to see. Instead of a “Master Key” that opens everything, you provide “Fine-Grained Permissions.” This keeps the AI focused on its task within its designated “Sandbox,” leaving the rest of your digital life private and protected.
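To make the “Guest Key” idea concrete, here is a minimal sketch of a folder allowlist in Python. It is an illustration only, not part of MCP or any MCP SDK: the `Sandbox` class and the folder paths are hypothetical names invented for this example.

```python
from pathlib import Path


class Sandbox:
    """Hypothetical allowlist: only paths under the granted roots are readable."""

    def __init__(self, allowed_roots):
        # Resolve once so later comparisons use absolute, normalized paths.
        self.allowed_roots = [Path(root).resolve() for root in allowed_roots]

    def is_allowed(self, requested):
        # resolve() collapses ".." segments, so "Budget/../HR" cannot sneak out.
        target = Path(requested).resolve()
        return any(
            target == root or root in target.parents
            for root in self.allowed_roots
        )

    def read_text(self, requested):
        # Refuse anything outside the granted folders before touching the disk.
        if not self.is_allowed(requested):
            raise PermissionError(f"outside sandbox: {requested}")
        return Path(requested).read_text()


# Example paths are made up for illustration.
sandbox = Sandbox(["/srv/work/2025 Budget"])
print(sandbox.is_allowed("/srv/work/2025 Budget/q1.csv"))
print(sandbox.is_allowed("/srv/work/2025 Budget/../HR/salaries.csv"))
```

The check compares whole path components rather than string prefixes, so a sibling folder with a similar name, such as “2025 Budget-old”, does not slip through.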

The mechanism behind this is “Explicit Consent.” Under MCP’s security guidelines, the application hosting the AI should ask for your permission before it uses a new tool or reaches into new data. The protocol cannot enforce this on its own, so it pays to choose apps that actually do it. You act as the “Security Architect,” setting the boundaries before the AI reads a single file. This creates a “Shielded Workflow” where the AI has the power it needs to help you, and you have the peace of mind you require.
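A consent check like this can be sketched in a few lines of Python. This is a simplified illustration of the idea, not how any particular MCP host implements it: `ConsentGate`, `ask_user`, and the tool names are all hypothetical.

```python
class ConsentGate:
    """Hypothetical host-side gate: a tool runs only after the user approves it."""

    def __init__(self, ask_user):
        # ask_user is any callable that takes a tool name and returns True/False,
        # for example a confirmation dialog. It is an assumption of this sketch.
        self.ask_user = ask_user
        self.approved = set()

    def call(self, tool_name, tool_fn, *args, **kwargs):
        # Prompt the first time a tool is seen, then remember the approval.
        if tool_name not in self.approved:
            if not self.ask_user(tool_name):
                raise PermissionError(f"user declined tool: {tool_name}")
            self.approved.add(tool_name)
        return tool_fn(*args, **kwargs)


# Stand-in for a real prompt: approve only the expense tracker.
gate = ConsentGate(lambda name: name == "expense_tracker")
total = gate.call("expense_tracker", sum, [120, 80, 45])
```

Remembering approvals keeps the prompts from becoming noise. A stricter host might ask again for every call, or for every new folder, which trades convenience for tighter control.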

In practice, this allows you to collaborate with AI on sensitive projects with full confidence. For example, a financial analyst gives their AI access to the “2025 Budget” folder and the “Expense Tracker” tool. The AI performs deep analysis and finds saving opportunities, yet it remains completely unaware of the private HR files or personal chat logs sitting in the very next folder. The “Glass Wall” remains solid and clear.

Success happens when power and safety work together. You transition from “worrying about access” to “leveraging abilities.”

The Takeaway: a secure AI has the key to the task, while you keep the key to the house.

Why This Matters for Your AI Product

Security isn’t just a checkbox; it’s a value proposition for your users:

- Trust as a Product Feature: Many users worry about AI “reading everything.” By implementing strict sandboxing, you turn privacy into a competitive advantage.

- The Principle of Least Privilege: Always give the AI the minimum access required for its current task. If an agent is writing a blog post, it doesn’t need to see your Stripe dashboard.

- Audit Trails: Since protocols like MCP route every action through an explicit tool call, you can log exactly what the AI accessed and when. This transparency is crucial for enterprise deployments.
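The audit trail idea from the list above can be sketched as a small wrapper around each tool. This is an assumption-laden illustration rather than a feature of MCP itself: `audited`, the record fields, and the `read_file` tool are hypothetical, and a real deployment would write to durable storage instead of a Python list.

```python
import json
from datetime import datetime, timezone


def audited(tool_name, tool_fn, log):
    """Wrap a tool so each call appends one JSON record to `log` (a plain list)."""

    def wrapper(**kwargs):
        record = {
            "time": datetime.now(timezone.utc).isoformat(),
            "tool": tool_name,
            "arguments": kwargs,
        }
        try:
            result = tool_fn(**kwargs)
            record["status"] = "ok"
            return result
        except Exception as exc:
            record["status"] = f"error: {type(exc).__name__}"
            raise
        finally:
            # Record the call whether it succeeded or failed.
            log.append(json.dumps(record, default=str))

    return wrapper


audit_log = []
read_file = audited("read_file", lambda path: f"contents of {path}", audit_log)
read_file(path="2025 Budget/q1.csv")
```

Each entry captures the tool name, the arguments, and a timestamp, which is the “what and when” an enterprise reviewer usually asks for.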

AI specialists call it: Sandboxing. A security mechanism for isolating running programs, so that any AI process stays within its designated boundaries.

If you were setting up a “Sandbox” for your AI assistant today, which single folder would you open first?

Part 13 of 18 | #RAGforHumans